Before you read this: what is documented, what is reconstructed, and what is inference

This article distinguishes between what is documented, what is reconstructed from publicly available technical material, and what is inference. The distinction matters, and it is held throughout.

Documented: the existence of nameless videos on YouTube; the use of Unicode non-printing characters to satisfy the platform's non-empty-title requirement; the taia777 channel, the Internet Checkpoint phenomenon, the viewer behaviour of leaving dated comments as personal markers, and the channel's eventual removal under the Content Identification (Content ID) system; YouTube's own published descriptions of its two-stage recommendation architecture; the contemporaneous community lore as recorded on Reddit, on Persian-language forums, and on the dedicated Internet Checkpoint fan pages.

Reconstructed, well-supported by public technical material: the specific Unicode validation behaviour by which the title field accepts a Zero Width Space, a Braille Pattern Blank, or a Hangul Filler; the failure of input sanitisation to normalise the string, for example with Normalisation Form Compatibility Composition (NFKC), and to check character classes before validation; the role of behavioural signals in replacing metadata priors at the candidate-generation stage of a recommender system. Each of these is observable on current systems or stated in published engineering work.

Inference: the framing of the nameless video as a beneficiary of a deliberate novelty surface in the platform's ranking model, sometimes described as a reverse filter bubble. This is a plausible reading of how diversification typically works in engagement-driven recommender systems, and is consistent with the behaviour observed around nameless content. It is not, as far as the public record goes, a reported feature of the platform's ranking model specifically. It is offered here as a way of thinking, not as a finding.

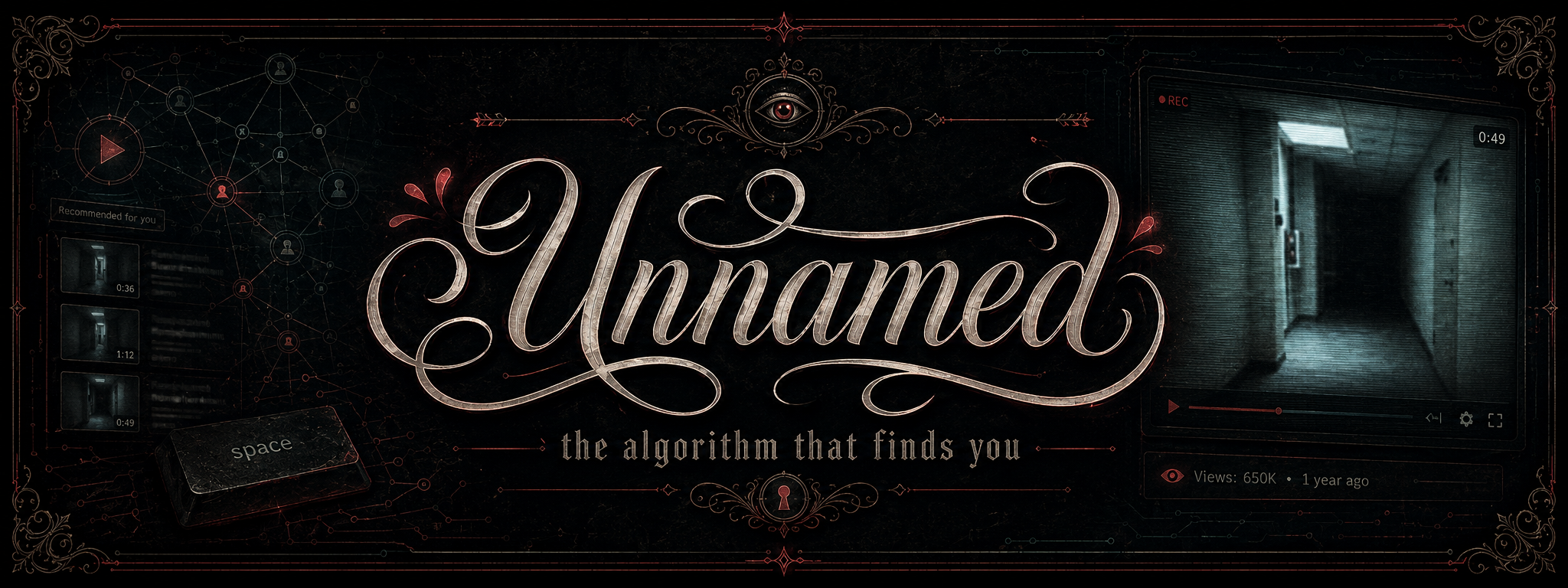

Story opening

The video had no title. The thumbnail showed a still from a Super Nintendo Entertainment System role-playing game, dusk light over a stone path. When you clicked on it, the soundtrack began to loop. Below the player, where the description should have sat, there was nothing. Where the tags should have been, nothing. The video had been on the platform for years. It had millions of views. Underneath the player, the comments arrived not in argument but in timestamps. 2019, I was 22, my mum was alive. Checkpoint, 2020, just lost my job. Returning here, 2021, things are okay. The video was a place. The place was operating as a public ledger of the years its viewers had lived through, alongside it.

The channel was called taia777. It uploaded nostalgic music from the 1990s. Most of its uploads had no name. The metadata field that the platform's interface presents to a creator as "Title (required)" had been satisfied by a character that the database counted and the browser refused to draw. The video was named. The name was nothing.

Case file

The Internet Checkpoint phenomenon, as it came to be called, emerged in the late 2010s around a small handful of taia777 uploads and a few imitators that followed. The audio was usually a high-quality rip of music from the Squaresoft and Konami eras of console role-playing: Chrono Trigger, Final Fantasy VI, Secret of Mana. The thumbnails were often crops from the games themselves, sometimes from the box art, sometimes a still of an empty corridor or a save point. There was no description, no chapter list, no creator commentary in the comments. The community lore that grew up around the videos described them as liminal spaces, way stations, or digital campfires; somewhere a viewer could sit for a few minutes and notice themselves.

What made the videos algorithmically remarkable was not the lore. It was that the lore could find them. A platform that runs on metadata had built a recommendation engine confident enough to surface, to millions of users, a class of content that carried none. The taia777 channel was eventually removed for copyright reasons after Content ID matched the audio loops to the underlying soundtracks. For the years before that, the videos sat in the recommendation feed in unusually durable form. They were not viral in the conventional sense; they were perennial. They came back. The system kept finding them, even when the system had been given nothing to find them with.

The publicly observable parts of this, the videos and their comments, the taia777 channel and its takedown, and the platform architecture described in the company's own engineering publications, are documented. The mechanism that turned a metadata vacuum into a recommendation tailwind is reconstructed from what is known about the platform's two-stage candidate generation and ranking model, and from Unicode validation behaviour that is verifiable on any current browser. One framing in particular, set out in the technical breakdown below, is a plausible inference rather than a reported finding.

Technical breakdown

The character that satisfies the field

The YouTube Data Application Programming Interface (API) version 3 specifies that the title field on a video resource must be a non-empty string of at most one hundred characters. An attempt to upload a video with an empty title returns a 400 Bad Request error with the reason invalidTitle. The validation logic asks one question: is this string empty? The Unicode Standard provides a number of characters that allow a string to answer "no" to that question while drawing nothing on screen.

The three most commonly used are the Zero Width Space (U+200B), classified in the General Punctuation block and originally introduced to mark word boundaries in scripts like Thai and Japanese that do not use spaces; the Braille Pattern Blank (U+2800), defined as a Braille cell with no dots raised, used as a fixed-width space in Braille systems; and the Hangul Filler (U+3164), a Korean alignment glyph that several platforms render with no visible width. Each of these characters has a legitimate linguistic purpose. Each of them encodes, in the Unicode Transformation Format 8-bit (UTF-8) representation, as a multi-byte sequence that occupies positive space in the database and positive space in the JavaScript Object Notation (JSON) payload that travels from the client to the server. None of them draws anything in a typical web browser.

The uploader submits a request whose title field contains, for example, the single Braille Pattern Blank, encoded as the three bytes 0xE2 0xA0 0x80. The validator reads three bytes. The title is therefore not empty. The title is accepted. The title is stored. When the platform's web client subsequently retrieves the video resource, it asks the browser to render the character. The browser looks up U+2800 in the active font, finds the glyph for that code point defined as an empty two-by-four grid, and draws the empty grid. The viewer sees nothing where a title should be. The system has done everything it was asked to do.
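The sequence above can be reproduced in a few lines of Python. This is a minimal sketch, not the platform's validator: `naive_title_ok` and `MAX_TITLE_CHARS` are hypothetical names standing in for a check that only asks whether the string is non-empty and within one hundred characters.

```python
# Hypothetical stand-in for a "non-empty, at most 100 characters" check.
# It is an assumption for illustration, not the platform's actual code.
MAX_TITLE_CHARS = 100

GHOST_GLYPHS = {
    "ZERO WIDTH SPACE": "\u200b",
    "BRAILLE PATTERN BLANK": "\u2800",
    "HANGUL FILLER": "\u3164",
}


def naive_title_ok(title: str) -> bool:
    """Accept any string that is non-empty and within the length limit."""
    return 0 < len(title) <= MAX_TITLE_CHARS


for name, char in GHOST_GLYPHS.items():
    encoded = char.encode("utf-8")
    print(f"{name}: U+{ord(char):04X} -> {encoded.hex(' ')} "
          f"({len(encoded)} bytes), accepted={naive_title_ok(char)}")

print("empty string accepted:", naive_title_ok(""))
```

Each of the three characters encodes to three bytes and passes the check, while the empty string is rejected. The check is doing what it was written to do.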

What "length" means

The interesting failure mode here is that length is not one thing. It can mean any of several things, depending on which layer of the stack is asking the question. The byte length of a UTF-8 string is the number of bytes it occupies; the code point length is the number of Unicode code points it contains; the grapheme length is the number of visual units a human reader would recognise as characters. For the Zero Width Space, the byte length is three, the code point length is one, and the grapheme length, in that reader's sense, is zero, although a formal text segmenter still reports one cluster. For the Braille Pattern Blank, the byte length is three, the code point length is one, and the grapheme length is one, although the grapheme in question is blank. For the Hangul Filler, the byte and code point lengths are again three and one, and the character renders as visible width on some platforms and as nothing on others.
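The three measures can be compared side by side. The sketch below is an approximation under stated assumptions: Python's standard library has no grapheme segmenter, so the third figure, `visible`, is a rough proxy that counts code points expected to draw something. It does not merge combining sequences, and it counts the Braille Pattern Blank as zero because the cell is empty. `BLANK_LOOKING` is an illustrative, non-exhaustive set chosen for this example.

```python
import unicodedata

# Assumption: a short, illustrative set of code points that are not classed as
# whitespace or format characters but usually draw nothing. Not exhaustive.
BLANK_LOOKING = {0x2800, 0x3164, 0x1160, 0x115F, 0xFFA0}


def draws_nothing(char: str) -> bool:
    """Rough heuristic: separators, control/format characters, known blanks."""
    category = unicodedata.category(char)
    return (
        category in {"Zs", "Zl", "Zp", "Cc", "Cf"}
        or ord(char) in BLANK_LOOKING
    )


def lengths(text: str) -> dict:
    """Return the byte count, code point count, and a visible-character proxy."""
    return {
        "bytes": len(text.encode("utf-8")),
        "code_points": len(text),
        "visible": sum(1 for ch in text if not draws_nothing(ch)),
    }


for label, text in [("ZWSP", "\u200b"), ("Braille blank", "\u2800"),
                    ("Hangul filler", "\u3164"), ("ordinary", "Secret of Mana")]:
    print(label, lengths(text))
```

A check written against `bytes` or `code_points` sees a one-character title; only the third figure reflects what a reader would see.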

A validator that checks byte length, or that strips standard American Standard Code for Information Interchange (ASCII) whitespace and nothing else, will accept all three of the common ghost glyphs without complaint. A validator that checks grapheme length, with a list of zero-width and blank-rendering categories held out, will reject them. The Unicode Standard also defines compatibility normalisation forms, the most consequential of which is Normalisation Form Compatibility Composition (NFKC), which folds compatibility variants of a character into a single canonical representation. NFKC alone is not sufficient: it turns a no-break space or an ideographic space into an ordinary space, but it leaves the Zero Width Space and the Braille Pattern Blank unchanged and maps the Hangul Filler to another invisible filler, U+1160. A title field that runs incoming strings through NFKC and then rejects strings made up only of whitespace, format characters, and blank-rendering code points would not accept any of the ghost glyphs as a valid title. The persistence of nameless videos suggests that the validator does not do this; it suggests, in the more general sense, that input sanitisation on the field was specified against the wrong definition of length.

This is the place defenders should be looking. The Unicode Standard is large, deliberately so, and the characters that look blank are scattered across blocks designed for very different purposes. Filtering on a denylist of known ghost glyphs catches some of them; it does not catch the next one. The structural defence is to normalise input first, then validate the normalised result against semantic intent, not against a byte count. A title is meant to be readable. Readable is a grapheme-length-and-class question, not a bytes-on-disk question.
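The normalise-then-validate order can be sketched as follows. This is one possible shape for such a check, not a description of any platform's code, and `is_readable_title`, `INVISIBLE_CATEGORIES`, and `BLANK_LOOKING` are hypothetical names. NFKC folds compatibility spaces such as U+00A0 and U+3000 into an ordinary space, but it leaves U+200B and U+2800 unchanged and maps U+3164 to U+1160, so the sketch pairs normalisation with a test on Unicode general category. The small explicit set covers blank-rendering characters that categories cannot express, and it carries the denylist weakness described above.

```python
import unicodedata

# General categories that put no ink on the page by themselves.
INVISIBLE_CATEGORIES = {"Zs", "Zl", "Zp", "Cc", "Cf"}
# Assumption: illustrative residue of blank-rendering code points that are
# classed as symbols or letters. This part is still a denylist.
BLANK_LOOKING = {0x2800, 0x3164, 0x1160, 0x115F, 0xFFA0}


def is_readable_title(raw: str, max_chars: int = 100) -> bool:
    """Normalise first, then require at least one character that draws something."""
    normalised = unicodedata.normalize("NFKC", raw)
    if not 0 < len(normalised) <= max_chars:
        return False
    return any(
        unicodedata.category(ch) not in INVISIBLE_CATEGORIES
        and ord(ch) not in BLANK_LOOKING
        for ch in normalised
    )


for candidate in ["\u200b", "\u2800", "\u3164", "\u00a0\u3000", "Corridors of Time"]:
    print(repr(candidate), is_readable_title(candidate))
```

The length limit is applied to the normalised string here, which is a design choice: NFKC can lengthen a string, for example by expanding a ligature into separate letters.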

How the recommendation engine fills the gap

The platform has described its recommendation system, in published engineering work, as a two-stage funnel. The first stage, candidate generation, narrows a corpus of roughly eight hundred million videos to a few hundred plausible candidates for a given viewer. The second stage, ranking, scores those candidates and produces the order in which they appear. Candidate generation, in the standard case, draws on a combination of embedding retrieval, collaborative filtering, and metadata priors. The first two are behavioural. The third is linguistic.

For a video with a real title, real tags, and a real description, the metadata priors do useful work. The system can map the words in the title to a topic, place the video in a category, and offer it to users whose history suggests they have an interest in that category. For a video whose title contains a single Braille Pattern Blank, the metadata priors are unavailable. The system is forced to lean entirely on the behavioural signals.

The behavioural signals are sufficient. Once a small population of users has watched a nameless video to completion, the system learns that the video clusters, in the model's embedding space, near other videos those users watch. If those users also watch ambient music, lo-fi mixes, or video game soundtracks, the nameless video acquires, by association, an effective category label that no human ever supplied. The label does not appear in the database; it appears in the geometry of the model. The video is then recommended not because of what it is called but because of who else has stayed with it. Item-to-item collaborative filtering, the long-standing workhorse of recommender systems, is well suited to this kind of metadata-free item. It does not require a description. It requires sustained attention from people who resemble other people, which also means it has nothing to work with until a first audience has arrived.
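A toy version of item-to-item collaborative filtering shows how a video can acquire neighbours without any metadata being read. Everything here is invented for illustration: the watch histories, the video identifiers, and the choice of cosine similarity over binary audience sets. Production systems use learned embeddings at a very different scale.

```python
from math import sqrt

# Hypothetical watch histories: user -> set of video ids watched to completion.
# "vid_blank" stands for a video whose title is a single invisible character.
HISTORIES = {
    "u1": {"lofi_mix", "snes_ost", "vid_blank"},
    "u2": {"lofi_mix", "ambient_rain", "vid_blank"},
    "u3": {"snes_ost", "ambient_rain", "vid_blank"},
    "u4": {"cooking_show", "news_clip"},
    "u5": {"cooking_show", "lofi_mix"},
}


def audiences(histories):
    """Invert user -> videos into video -> set of users."""
    by_video = {}
    for user, videos in histories.items():
        for video in videos:
            by_video.setdefault(video, set()).add(user)
    return by_video


def cosine(a, b):
    """Cosine similarity between two binary audience sets."""
    if not a or not b:
        return 0.0
    return len(a & b) / sqrt(len(a) * len(b))


def neighbours(video, histories, top_n=3):
    """Rank other videos by audience overlap with `video`; no titles are read."""
    by_video = audiences(histories)
    target = by_video.get(video, set())
    scored = [
        (other, cosine(target, users))
        for other, users in by_video.items()
        if other != video
    ]
    # Highest similarity first; ties broken alphabetically for stable output.
    return sorted(scored, key=lambda pair: (-pair[1], pair[0]))[:top_n]


print(neighbours("vid_blank", HISTORIES))
```

In this data the nameless video's nearest neighbours are the ambient, soundtrack, and lo-fi items, purely because their audiences overlap. A video that nobody has watched yet scores zero against everything.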

The click-through paradox

Once the recommendation engine has surfaced a candidate, the ranking model scores it. Among the features the ranking model weighs heavily is the Click-Through Rate (CTR), the proportion of users who, on seeing the video in a feed, click on it. Conventional advice to creators is that strong titles and informative thumbnails increase CTR. Nameless videos invert this advice in an instructive way. The blank where the title should be does not reduce attention; it concentrates it. The viewer's eye, scanning a feed of labelled rectangles, registers the unlabelled rectangle as an anomaly. Anomalies attract clicks. Curiosity is a more reliable signal than information, and the system does not draw a distinction between the two.

Once clicked, the video performs well on the remaining metrics. The audio loops cleanly. There is no introduction to skip, no call to subscribe, no spoken voice that the viewer might dislike. Watch-time is long. Re-watch frequency is high. The ranking model, scoring the candidate, registers high CTR and high retention together and concludes that the candidate is high quality. The candidate is shown to more viewers. The pattern repeats.
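The interaction of the two metrics can be illustrated with a toy scoring function. The sketch assumes, for illustration only, that a ranking score resembles expected watch time per impression, that is, click-through rate multiplied by mean watch time per click. The `Candidate` class, the scoring rule, and every number below are assumptions, not the platform's ranking model.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    video_id: str
    impressions: int
    clicks: int
    watch_seconds: float  # total seconds watched across all clicks

    @property
    def ctr(self) -> float:
        """Click-through rate: clicks per impression."""
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def mean_watch(self) -> float:
        """Average seconds watched per click."""
        return self.watch_seconds / self.clicks if self.clicks else 0.0

    def score(self) -> float:
        """Expected watch seconds per impression: CTR x mean watch time."""
        return self.ctr * self.mean_watch


def rank(candidates):
    """Order candidates from highest to lowest score."""
    return sorted(candidates, key=lambda c: c.score(), reverse=True)


# Invented figures for illustration only.
feed = [
    Candidate("titled_tutorial", impressions=1000, clicks=80, watch_seconds=80 * 120),
    Candidate("clickbait_clip", impressions=1000, clicks=150, watch_seconds=150 * 20),
    Candidate("vid_blank", impressions=1000, clicks=120, watch_seconds=120 * 400),
]

for c in rank(feed):
    print(c.video_id, round(c.score(), 1))
```

With these figures the nameless video ranks first: it does not have the highest click-through rate, but it combines a good one with long sessions. Nothing in the score refers to the title.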

This is not a hack against the algorithm. The algorithm has been asked to identify content that viewers click on and stay with, and it has identified content that viewers click on and stay with. The deterministic system is performing its function.

A plausible inference: the novelty surface

A further reading, which goes beyond what the platform has documented and should be treated as inference rather than fact, is that recommendation engines built to optimise engagement at scale benefit from occasionally surfacing content that has no clear topic affiliation. A user trapped in a strong taste cluster, a filter bubble, eventually exhausts the cluster's catalogue and disengages. The engagement-maximising response is to inject a small amount of novelty: content that does not belong to the cluster and may pull the user toward a new one. Nameless videos, lacking metadata, are well placed to serve as novelty injections. They have no category to argue against the user's existing taste. They show up as neutral.

This framing is consistent with how diversification is typically built into recommender systems and with the general behaviour observed around nameless content. It is not, as far as the public record goes, a reported feature of the platform's ranking model. It is offered here as a way of thinking about why a metadata vacuum was not a handicap, with the caveat that the strength of the framing is at the level of a plausible mechanism, not at the level of a documented design.

What this looks like to the security side of the building

The same input pathway that produces nameless videos produces every other Unicode-class abuse: blocklist evasion using Zero Width Space between the letters of a banned word, log obfuscation using non-printing characters in usernames that make log lines unsearchable, and homograph attacks that build visually identical user identifiers from different code points. The Internet Checkpoint is the benign and aesthetic version of this pattern. The hostile versions have been documented for years on every platform that has tried to enforce content rules on user-submitted text.
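The blocklist case is the easiest of these to demonstrate. In the sketch below the blocklist entry and all function names are hypothetical. A substring check misses a word with Zero Width Spaces threaded between its letters, and the same check succeeds once the text has been normalised and stripped of format characters.

```python
import unicodedata

BANNED = {"spoiler"}  # hypothetical blocklist entry


def naive_contains_banned(text: str) -> bool:
    """Plain substring match against the blocklist."""
    lowered = text.lower()
    return any(word in lowered for word in BANNED)


def strip_format_chars(text: str) -> str:
    """Normalise with NFKC, then drop format (Cf) characters such as U+200B."""
    normalised = unicodedata.normalize("NFKC", text)
    return "".join(ch for ch in normalised if unicodedata.category(ch) != "Cf")


def hardened_contains_banned(text: str) -> bool:
    """Match against a cleaned copy of the text."""
    return naive_contains_banned(strip_format_chars(text))


evasive = "\u200b".join("spoiler")  # one ZWSP between each pair of letters
print(naive_contains_banned(evasive), hardened_contains_banned(evasive))
```

Two caveats apply. Stripping format characters also removes joiners that are meaningful in some scripts and in emoji sequences, so the cleaned copy is better used for matching than for storage. The sketch also does nothing about homographs built from different scripts, which NFKC does not fold together.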

The defence is the same in every case: normalise before you validate, validate against semantic intent, and treat any input field that accepts free-form text as a Unicode-class surface, not an ASCII surface. The lesson here is not specific to YouTube. YouTube is simply the platform on which the absence of this defence has produced the most photographed haunting.

Core lesson

The Internet Checkpoint did not exploit a bug. The validator did exactly what its specification required: it confirmed that a non-empty string had been provided. The recommender did exactly what its objective required: it identified content that satisfied the engagement metrics and surfaced it. Neither system asked, because neither system had been designed to ask, whether the string was meaningful or whether the engagement was the engagement the engineers had imagined when they wrote the objective. The architecture of the void is not the failure of the system. It is the system's specification, viewed from an angle the specification does not address.

An algorithm is indifferent to intent. It does not know that a title is supposed to identify a video. It knows that a title is a non-empty string of at most one hundred characters. It does not know that engagement is supposed to mean enjoyment. It knows that engagement is a number derived from clicks, watch-time, and retention. When a class of content arrives that satisfies the formal definitions without serving the underlying purposes, the system performs its function with no idea that the function and the purpose have parted ways. The taia777 videos were eventually identified, but they were identified by a separate system, Content ID, looking for a separate property: audio fingerprint matches against copyrighted recordings. The void was indexable from outside. It was not legible from inside.

That asymmetry, legible from outside and illegible from inside, is the part worth carrying away. A platform's own metrics are not capable, in general, of telling the platform what it is recommending. Other systems, with other objectives, can sometimes look in and notice. Most of the time, no one does. The void persists because the architecture of the system is exactly what the architecture of the system is, and what the architecture is, is a counter of bytes, not a reader of titles.

Glossary

The terms below cover the standards and components named in the technical breakdown. Each is explained in plain English; the precise behaviour is in the breakdown above.

- Unicode Standard

- An international character-encoding standard that assigns a unique numerical position, called a code point, to every character used in the world's writing systems and to a wide range of symbols and control sequences. The Unicode Standard is maintained by the Unicode Consortium.

- Unicode Transformation Format 8-bit (UTF-8)

- A variable-width encoding of the Unicode Standard in which each code point is represented by one to four bytes. UTF-8 is the dominant character encoding on the modern web.

- Code point

- A numerical position in the Unicode Standard, written in the form U+XXXX. Each code point identifies one character, including invisible and formatting characters.

- Grapheme cluster

- A unit of text that a human reader would perceive as a single character. A grapheme cluster may comprise one code point or several, and may render with positive width, zero width, or no glyph at all.

- Zero Width Space (ZWSP, U+200B)

- A non-printing character in the General Punctuation block, used to mark word boundaries in scripts that do not use spaces, and to control line-breaking behaviour in text engines.

- Braille Pattern Blank (U+2800)

- The Unicode character for a Braille cell with no dots raised. It is classified as a symbol, not as whitespace, and is treated by most fonts as a fixed-width blank.

- Hangul Filler (U+3164)

- A filler character used in Korean Hangul script to maintain alignment in character blocks. Several platforms render it as invisible, making it a frequent ingredient in nameless-name techniques.

- Unicode Normalisation Form Compatibility Composition (NFKC)

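- A normalisation form that applies compatibility decomposition followed by canonical composition, so that compatibility variants of a character, such as full-width letters, ligatures, and the no-break space, are folded into a single canonical form. Applied before validation, NFKC narrows the room in which ghost glyphs can be smuggled past a title-or-name check, but it does not remove characters such as the Zero Width Space or the Braille Pattern Blank; those need a separate check on character class.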

- Input sanitisation

- The process of cleaning user-supplied input before validation and storage. Adequate sanitisation depends on having the right model of what "clean" means for the field in question, which for free-form text is a Unicode-class question.

- YouTube Data Application Programming Interface (API) version 3

- The public Application Programming Interface through which third-party clients and creators read and write video metadata on the platform.

- Two-stage recommendation architecture

- A common design for large-scale recommendation systems, comprising candidate generation, which narrows a vast corpus to a tractable shortlist for a given viewer, and ranking, which scores the shortlist and orders it for display.

- Item-to-item collaborative filtering

- A recommendation technique that surfaces items based on co-engagement patterns among users, rather than on the items' own descriptive metadata.

- Click-Through Rate (CTR)

- The proportion of impressions that result in a click. A primary feature in most ranking models, weighed alongside watch-time and retention.

- Content Identification (Content ID)

- A separate audio and video fingerprinting system used by the platform to detect uploads that match copyrighted material in a rights-holder catalogue. Content ID operates on the media itself, not on the metadata describing it.

Further reading

The following sources informed this article and have been verified.

- Internet Checkpoint, the community archive page.

- Reddit r/OutOfTheLoop, What is going on with these Japanese recommended music videos with no titles?

- Reddit r/Unicode, How to get blank name on YouTube?

- Reddit, I Found A YouTube Video Without A Title.

- YouTube, This Video Has NO TITLE With No Blank Characters.

- Unicode Explorer, U+2800 Braille Pattern Blank.

- Unicode Explorer, U+3164 Hangul Filler.

- Invisible Text Pro, Unicode characters reference.

- Wikipedia, Zero-width space.

- Seqrite, Homoglyph Attacks: How Lookalike Characters Fuel Cyber Deception, March 2026.

- Particular Audience, How YouTube's Recommendation System Works.

- Google Support, Good to know about recommendations for YouTube's recommendation system.

Suggested viewing

Farrell McGuire, March 2026. A walkthrough of the nameless-video phenomenon and its life inside the algorithm, fittingly uploaded with no rendered title of its own.

Source: Farrell McGuire on YouTube.

Return to the case

The taia777 channel is gone. The mechanism is not. The byte sequence still passes the validator, the recommendation surface is still indifferent to titles, and the comment threads on the remaining imitator videos still operate as ledgers of the years their viewers have lived through. The architecture of the void is not specific to one channel or to one moment. It is a property of any system that defines a field as required, accepts any bytes that satisfy the requirement, and then judges what arrived by metrics that do not include the meaning of what arrived.

Counted, not read.